Radical Management Gathering

27-02-2011

Success Factors in a Scrum Sprint

14-03-2011

Last November, I started a quick poll on Story Size, Team Size, and Sprint Success. I wanted to explore the variables of team size, sprint duration, and average and maximum story size, and whether there is any correlation between these parameters and team success.

I received 81 responses (presumably from 81 different people and hopefully from 81 different teams, but I have no way of checking this). 78 claimed to be doing Scrum, one claimed XP, and two did not reveal their preference. This article presents a first look at the results.

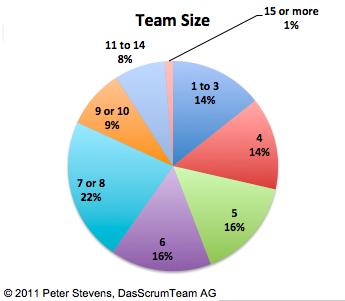

Team Size

Scrum recommends an Implementation Team size of 7+/-2, so 5 to 9. Based on the poll, 63% of the respondents are in the range of 5 to 10 (I should have sized the response blocks differently!). 28% have 1 to 4 people and 9% have 11 or more.

Sprint Length

These results were something of a surprise to me. More than half (63%) of all respondents indicated they did two-week sprints. Only 1/3 that number reported three-week sprints. In May 2008, I ran a quick poll on sprint length, and the answer came up even: almost exactly one third each were doing 2- and 3-week sprints, and the remaining third were spread across 1 week, 4 weeks, 1 month and variable.

Obviously the poll tells us that something has changed, but not why this change has happened. And in all honesty, I am not sure this represents a real change. The previous poll had only 34 respondents and even 81 is not a huge number.

On the other hand, the influence of XP on the Scrum community has grown, there has been a lot of discussion on “Scrum-But” since my original poll on the Nokia Test, and queuing theory tells us that shorter sprints with more stories per sprint should be more predictable.

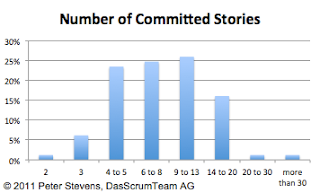

Number of Stories per Sprint

In my trainings, I recommend that teams take on at least 10 stories per sprint. This means that the average story size is around 10% of the team’s capacity. What do teams actually do? 74% of all teams take on between 4 and 13 stories per sprint, which puts the average around 7 or 8 and the average story size around 12% to 15% of the team’s capacity.
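The arithmetic behind those percentages can be sketched in a few lines of Python. This is only an illustration: it assumes the stories in a sprint add up to the team's full capacity, and the function name `average_story_share` is made up for this example.

```python
def average_story_share(stories_per_sprint: int) -> float:
    """Average story size as a fraction of sprint capacity.

    Assumption: the stories taken on fill the team's capacity exactly,
    so the average share is simply 1 / number of stories.
    """
    if stories_per_sprint <= 0:
        raise ValueError("stories_per_sprint must be positive")
    return 1.0 / stories_per_sprint


if __name__ == "__main__":
    # 10 is the recommendation above; 7 and 8 are the poll's rough average.
    for n in (10, 8, 7):
        print(f"{n} stories -> about {average_story_share(n):.1%} of capacity each")
```

With 10 stories the average is 10% of capacity; with 8 or 7 stories it is roughly 12.5% or 14.3%, which is where the 12% to 15% range comes from.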

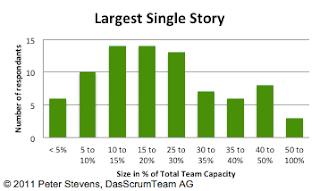

Largest Single Story

During my first Scrum Project as ScrumMaster, I made the observation that if my team took on a story that was 40% of their total capacity for the sprint, their chances of not completing the story were, uh, “excellent.” In the meantime, I have had conversations with people who suggest 25% is a better maximum. I was surprised to see that there are teams out there who will take on a story that represents 50% to 100% of the team’s capacity for the sprint!

According to this data, 54% of all teams insist on stories <= 20% of their capacity, and 79% insist on 35% or less.
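For a team that wants to apply such a cap during sprint planning, a check along the following lines might help. It is a rough sketch, not a prescribed Scrum practice: it assumes stories and capacity are estimated in the same unit (story points here), and the story names, the numbers, and the function `oversized_stories` are all hypothetical.

```python
def oversized_stories(story_points: dict, capacity: float, max_share: float = 0.25) -> dict:
    """Return the stories whose estimate exceeds max_share of the sprint capacity.

    Assumption: estimates and capacity use the same unit (e.g. story points).
    The default cap of 25% is one of the maximums mentioned above.
    """
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    limit = max_share * capacity
    return {name: points for name, points in story_points.items() if points > limit}


if __name__ == "__main__":
    # Hypothetical sprint: three stories, team capacity of 30 points.
    sprint = {"login": 3, "search": 8, "reporting": 13}
    print(oversized_stories(sprint, capacity=30))                  # cap at 25% of capacity
    print(oversized_stories(sprint, capacity=30, max_share=0.40))  # cap at 40% of capacity
```

With a 25% cap, both the 8-point and the 13-point story are flagged; with a 40% cap, only the 13-point story is.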

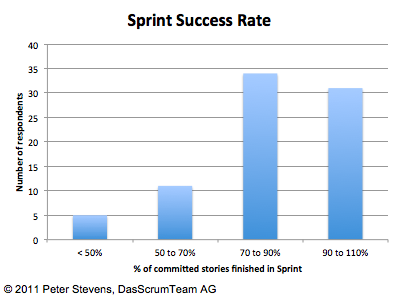

Success rate

Success is a tricky thing to measure. For this poll, I define success to mean, ‘the team delivers that which it commits to.’ This does not say anything about the productivity of the team. I once saw a 12 person team double its performance in story points after being split into two 6-person teams. But the team had been delivering what it promised, so by this definition it was a successful team.

I chose this measure of success for two reasons. 1) Delivering on commitments is a fundamental skill of a Scrum team. It builds trust between Implementation Team and Product Owner. 2) It is relatively easy (uh, possible) to measure in this context (“the light’s better”).

Looking at this graph, you might get the impression that a 70 to 90% success rate is OK. I don’t think so. The goal is to be a reliable partner. Yes, a team needs the safety to fail once in a while, especially when they just start out with Scrum, and if you focus too much on the goal of certainty, then other things, like performance and creativity, may suffer.

Scrum is Hard to do Well

So I would interpret this data differently. There are teams that are doing well, “successful” and those that need improvement:

…but I think we’re getting better!

It looks like 38% are doing pretty good Scrum, delivering on their commitments, and 62% could be doing better. This compares favorably with the 76% of all respondents who scored 7 or fewer points in the 2008 Poll on the minimal Nokia Test.

The interesting question is what are the signs of a good (or bad) Scrum team? I have been playing with Pivot Sheets and will post some analysis of the relationships between the various factors. (And if anyone is good with statistics and/or has access to better tools than Excel for this purpose, your help would be most welcome!).

Update 6.3.11: Here is the original Poll and the actual data in spreadsheet form. (Don’t use Google’s graphical summary of the results. For reasons I do not understand, it is not correct.)

2 Comments

I don't think that between 70 and 90% is bad. Yes, one goal is to be predictable.

I once coached a team that made their sprints all the time. 3 other teams in the same organisation did not. None of them used burndown charts at that time. When we introduced burndown charts, we had some discussion about whether it was worth using the burndown chart for the "good" team.

It was decided they would too.

The burndown chart showed that there was a big problem with the so-called good team.

They had almost nothing done until the very last day.

This team was calling things finished that were in reality not finished (resulting in bad quality).

I had another team that was always above 100%. This team always under-committed. They did this because they received large bonuses for arriving at 100%.

Hi Yves,

I am totally with you that this is a very superficial definition of success.

I believe measurements of team performance can be useful for helping the team understand its performance. They are worthless for 'driving' performance, setting targets, or comparing teams. The measurements are easily manipulated and may ignore important aspects, such as quality or exertion, as you correctly point out.

I don't think a team should regularly over-commit. It means the team does not understand its capacity or is being put/is putting itself under too much pressure.

So while I would never even suggest punishing a team for not meeting its commitment, as a coach, I would encourage a team to try to hit its commitment +/- 10% most of the time.

Cheers,

Peter